Merry Christmas and a Happy New Year! This article is going to cover an easy yet versatile method of connecting a web form, with user input, to a SQL Server database, with the aim being to successfully move information input by the user to a structured set of tables in the database. This is a requirement of many website designers who need to capture user input for processing, to capture data such as contact information, etc. There are many methods of doing this, and this article is doing to be using the following technologies:

- HTML 4.0 and above

- .NET Framework 4.0

- Visual Studio 2005 (or Notepad, up to you!)

- ASP.NET using the Visual Basic (VB.NET) language

- Microsoft Internet Information Services on Windows 7

- Microsoft SQL Server 2008 R2 Express Edition

A quick disclaimer - the code shown below may or may not work for you and I accept no responsibility howsoever caused by the use of my code on systems not under my control either directly or indirectly. OK? :-)

Also if you're cutting/pasting remember to adjust the code indentations and line breaks as I suspect Google will mangle my formatting when I post. VB code line break character is _ and HTML/SQL doesn't need one in most cases (although it's good practice). To aid readability - SQL comment character is --, VB is '. Code is in Courier New, text in Calibri.

Firstly, I'll cover creating a (very!) basic web form to allow user input. Then, I'll show you how to con your home PC/laptop into thinking it's a web server. Next, I'll create an .ASPX page with VB code to handle the input. I'll go through creating some data validation functions too. Then, I'll cover creating a connection using ADO (ActiveX Directory Objects, part of the COM objects in Windows) to SQL Server. After, I'll cover a couple of methods of sending data to the database (and some ideas on retrieving it, too). Finally, I'll go through some ideas on improvements and other considerations such as security, encryption and client-side validation.

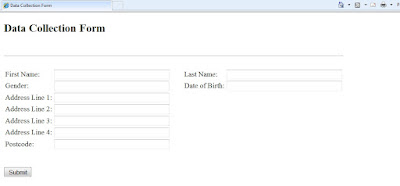

Okay, firstly let's define a new HTML page to capture the information. We'll be capturing a user's name, address and telephone number, date of birth and gender. Create a HTML page like the following:

<!DOCTYPE html PUBLIC "-//W3C//DTD XHTML 1.0 Transitional//EN" "http://www.w3.org/TR/xhtml1/DTD/xhtml1-transitional.dtd">

<html xmlns="http://www.w3.org/1999/xhtml" >

<head>

<title>Data Collection Form</title>

</head>

<body>

<form name = "form1" action="send.aspx" method="POST">

<h2>Data Collection Form</h2>

<br><br>

<table>

<tr>

<td>First Name:</td>

<td><input type="text" name="firstName" size="40" /></td>

<td> </td>

<td>Last Name:</td>

<td><input type="text" name="lastName" size="40" /></td>

</tr><tr>

<td>Gender:</td>

<td><input type="text" name="gender" size="40" /></td>

<td> </td>

<td>Date of Birth:</td>

<td><input type="text" name="dateOfBirth" size="40" /></td>

</tr><hr><tr>

<td>Address Line 1:</td>

<td><input type="text" name="address1" size="40" /></td>

</tr><tr>

<td>Address Line 2:</td>

<td><input type="text" name="address2" size="40" /></td>

</tr><tr>

<td>Address Line 3:</td>

<td><input type="text" name="address3" size="40" /></td>

</tr><tr>

<td>Address Line 4:</td>

<td><input type="text" name="address4" size="40" /></td>

</tr><tr>

<td>Postcode:</td>

<td><input type="text" name="postcode" size="40" /></td>

</tr>

</table>

<br><br>

<input type="submit" value="submit" />

</body>

</html>

The form looks like this:

Next, we need to create a database. We're not going to configure it at this point - just create it. So open SQL Server Management Studio, log in as a user with sysadmin role, open a New Query window and type:

CREATE DATABASE customerInformation

GO

Now let's create some tables. We'll try and keep this to at least 1NF, meaning no duplicate values, one item per field per row and primary keys. Let's do it:

USE customerInformation

GO

CREATE TABLE personalInfo (

customerID int IDENTITY CONSTRAINT pk_Customer_ID PRIMARY KEY

firstName varchar(50), lastName varchar(50),

gender varchar(6), dateOfBirth smalldatetime

)

GO

CREATE TABLE addressInfo (

customerID int IDENTITY CONSTRAINT pk_Customer_ID PRIMARY KEY

address1 varchar(255), address2 varchar(255),

address3 varchar(255), address4 varchar(255),

postcode varchar(10)

)

GO

Seasoned DBAs reading this might be up in arms now - where are the foreign keys? What about indexes? Why am I using a surrogate key? But the scope of this article is HTML to SQL linkage, so I'll leave the topic of normalisation, optimisation and good SQL coding practice alone.

Let's create the stored procedures you'll need to load data into the database. One question you could ask now is - why use stored procedures? Given that the System.String method can hold 2^31-1 characters, why bother? The answer is security - to help prevent SQL injection, sending parameters to a stored procedure at least starts to mask the process that the SP is following (although in our example, it'll only be INSERTs anyway with no validation database-side).

CREATE PROCEDURE personalInfo$Loader (

@customerID int,

@firstName varchar(50), @lastName varchar(50),

@gender varchar(6), @dateOfBirth smalldatetime

)

AS

INSERT INTO dbo.personalInfo

VALUES(@firstName, @lastName, @gender, @dateOfBirth) -- The IDENTITY

-- attribute auto-generates customerID if NULL is passed in

GO

CREATE PROCEDURE addressInfo$Loader (

@customerID int,

@address1 varchar(255), @address2 varchar(255),

@address3 varchar(255), @address4 varchar(255),

@postcode varchar(10)

)

AS

INSERT INTO dbo.addressInfo

VALUES(@address1, @address2, @address3, @address4, @postcode) -- The IDENTITY attribute

-- auto-generates customerID if NULL is passed in

GO

Execute these CREATE statements.

Now, we need to enable IIS for your local machine. I'm assuming you're running Windows 7 but this is possible in XP and Vista too (downloads might be required). Simply go to Control Panel -> Programs and Features, then click 'Turn Windows Features on or off'. Check 'Internet Information Services' and 'Internet Information Services Hostable Web Core' and click OK. Once installed, open IIS Manager by using Start -> Run -> iis. Expand the tree in the Connections window and right-click on Sites, click Add Web Site. Now name the site 'Data Collection Form' with DefaultAppPool. Under Content Directory point the site to the path of the html page you created above (by default, sites should be in C:\Inetpub\wwwroot). Ensure the binding is http, 127.0.0.1 on port 80. Enter the host name of your local machine (local machine name). Check Start Web Site Immediately. Click OK. Now close IIS.

Ensure you have installed the .NET Framework 4.0 package from Microsoft - if you haven't, Google it and download. Once installed, open a command window, type aspnet_regiis -i and hit Enter. This will register the .NET framework with IIS and when you now open IIS, you'll see the aspnet_client folder under the Connections tree. Finally, go to the .NET Framework 4.0 root folder (normally under C:\Windows\Microsoft.NET\Framework\v4.0.30319\Config) and find web.config. Insert the following values into the config file after <location> and before <system.web> tags (at the top):

<connectionStrings>

<add name="localConn"

connectionString="Data Source=COMPNAME\NAMEDINSTANCE; Initial Catalog=DBNAME; User Id=DOMAIN\USER; Password=PASSWORD" providerName="System.Data.SqlClient" />

</connectionStrings>

Swap out CAPITAL strings above for actual values. What this will do is add a referencable connection string to the .NET framework (whether we use it or not) - we can then reference localConn as an object from ASP i.e. localConn.Open, localConn.Execute etc. This is here for completeness - we'll actually use ADO, but the choice is yours.

Save your HTML form as C:\Inetpub\wwwroot\index.html. Now go to http://127.0.0.1/index.html and you'll see your form.

Next we must create the ASPX page which will handle the data processing. It's referred to in the HTML header as send.aspx, so that's what we'll call it. Open a new text file and save it as send.aspx (in VS/Notepad). Here is the code:

<%@ Page Language="VBScript" AspCompat="true" Debug="true" %>

<%

' We WON'T use the adaptation to .NET, instead here's an alternative method using ADO:

connection = Server.CreateObject("ADODB.Connection")

connection.ConnectionString = "Data Source=COMPUTERNAME\NAMEDINSTANCE;Initial Catalog=DBNAME;User

Id='USERNAME';Password='PASSWORD';PROVIDER=SQLNCLI10"

connection.Open

Dim firstName, lastName, gender, DateOfBirth, address1, address2, address3, address4, postcode

firstName = Request.Form("firstName")

lastName = Request.Form("lastName")

' ... keep going until you get to postcode

postcode = Request.Form("postcode")

Dim personalInfoQueryString As String

Dim addressInfoQueryString As String

personalInfoQueryString = "EXECUTE dbo.personalInfo$Loader " + chr(39) + ", " + firstName + "chr(39)" _

+ ", " + lastName + chr(39) + ", " + chr(39) + gender + chr(39) + ", " + chr(39) + dateOfBirth + _

chr(39) + ";"

addressInfoQueryString = "EXECUTE dbo.addressInfo$Loader " + chr(39) + ", " + address1 + "chr(39)" _

+ ", " + address2 + chr(39) + ", " + chr(39) + address3 + chr(39) + ", " + chr(39) + address4 + _

chr(39) + ", " + chr(39) + postcode + chr(39) + ";"

connection.Execute(personalInfoQueryString)

connection.Execute(addressInfoQueryString)

response.Write("Data has been written to the database!")

connection.Close

connection = Nothing

%>

So what does it do? Firstly, it sets up an ad-hoc connection using ADO. We could have achieved this using the connection string in the .NET framework on the 'server' for more security, if we wanted to. Next, we open the connection, then define a couple of connection string objects as Strings. Then we load the values from the form using Request.Form into local variables defined as a typeless variable using Dim. Then, each string is constructed using VB.NET syntax (NOT SQL syntax!) which means that we have to specify single quotes as escape characters (hence the ASCII chr references) to avoid mis-parsing during compilation, and use the + operator for string concatenation. The _ character here is used for multi-line splits to avoid messy code. Then, we execute the string using the Execute method of the Connection object you specified. Then we close and deallocate the connection and end the code. A response is returned to the browser confirming success.

Now let's test the code. It's not unusual for newly-input code to require a debug, even when copied, so be prepared to comb your code for syntax errors, commas instead of full stops, missing apostrophes and all the other fun banana skins that development entails! Open your web form in the browser and put some sample information in. Then hit Submit. If there's a problem, debug is specified to TRUE in the ASP so you'll be able to see the stack trace and diagnose it. If not, you'll see 'Data has been written to the database.'. Go to SSMS if this is the case and SELECT * FROM dbo.personalInfo. You'll see one row returned.

This is the most basic method of linking HTML to SQL that I know. There are many more, using C#, client-side scripting, JavaScript, and other sites can tell you all about them. This method works - and is very versatile.

For example, you could add data validation. Here's a bit of code that will convert 'Yes', 'No' in different cases and in shortened forms (i.e. user types 'yes') to a bitwise (1 or 0) character. bitCollection is an object of type Microsoft.VisualBasic.Collection() which can hold variables (rather than strings, such as a string array). Preload bitCollection using Dim bitCollection As New Microsoft.VisualBasic.Collection() then bitCollection.Add(VAR_NAME), with the fields which you want converting. For the code below, imagine we have a 'Sign up for more information?' field, named (in the code) as signUpForSpam. The following code is a bit clumsy - the IFs aren't all that scalable and I could shorten the array definitions, for example - but it works:

Dim yesBoolValues(5) As String

Dim noBoolValues(5) As String

yesBoolValues(0) = "YES"

yesBoolValues(1) = "Y"

yesBoolValues(2) = "yes"

yesBoolValues(3) = "y"

yesBoolValues(4) = "1"

yesBoolValues(5) = "Yes"

noBoolValues(0) = "0"

noBoolValues(1) = "NO"

noBoolValues(2) = "N"

noBoolValues(3) = "no"

noBoolValues(4) = "n"

noBoolValues(5) = "No"

Dim yesFlag As Boolean

Dim noFlag As Boolean

Dim flag1 As Boolean

flag1 = False

yesFlag = False

noFlag = False

For Each i In bitCollection

For Each j In YesBoolValues

If (CStr(LTrim(RTrim(i))) = j) Then

yesFlag = True

End If

Next j

For Each k in NoBoolValues

If (CStr(LTrim(RTrim(i))) = k) Then

noFlag = True

End If

Next k

If ((yesFlag = False) AND (noFlag = False)) AND (flag1 = False) Then

response.write("<br><br>One or more Y/N values in the form are invalid. Please specify either Y or N.")

flag1 = True

End If

If i = Nothing Then

flag1 = True

End If

yesFlag = False

noFlag = False

Next i

For Each y In yesBoolValues

If (y = CStr(LTrim(RTrim(signUpForSpam)))) AND (flag1 = False) Then

End If

Next y

For Each n In noBoolValues

If (n = CStr(LTrim(RTrim(signUpForSpam)))) AND (flag1 = False) Then

'PUT YOUR VAR NAME HERE = 1

End If

Next n

If you're wondering what 'flag1' is, it's for encapsulating the connection.Execute commands later on. Say you didn't want to execute the query strings unless the validation passes (a reasonable assumption). You could use an IF ... THEN ... ELSE structure e.g.

If flag1 = True Then

'Connection.Execute(queryString) goes here

response.write("Successful!")

Else

response.write("Failed!")

End If

For a recent project, I used seven different types of validation and multiple flags amalgamated into a 'master flag' which encapsulated the final queryString execution - in this way, only if all validation passed would the query strings execute.

Another data validation function (courtesy of VB) - I needed a way to check that a date was valid and within a range. After an hour or so of trying to put together a string parsing function (convert date type to string; parse string, separating by /; convert split strings to int; check int within range (1-31),(1-12),(1980-2100)), I thought - there must be a better way. Here it is:

Dim fooDate As Date 'this is a throwaway value

Dim flag2 As Boolean

flag2 = False

For Each i In smalldatetimeCollection

If Not Date.TryParse(CStr(i), fooDate) AND (flag2 = False) Then

response.write("One or more dates entered are invalid. Use format DD/MM/YYYY.")

flag2 = True

End If

Next i

Again, preload smalldatetimeCollection with the fields you want to validate first.

What about checking for NULLs? Remember to correlate your NULLable values (ones that are allowed NULL, in our example it's all of them accessible to VB - the PRIMARY KEY is looked after by the DB, not accessible to VB) with the ones set in the DB. For example, in a three-column table:

EmployeeID INT UNIQUE NOT NULL, Name VARCHAR(255) NOT NULL,

Salary MONEY NOT NULL, Grade VARCHAR(2)

Grade is allowed NULLs. So notNullableCollection in the following code should contain the other three variables:

Dim flag3 As Boolean

flag1 = False

For Each i In notNullableCollection

i = CStr(i)

If (i = Nothing OR (LTrim(RTrim(Len(i) = 0)))) AND flag1 = False Then

response.write("<h2><font color=Red face=Calibri>There are mistakes in the form.</font></h2><br>")

response.write("<h3><font color=Red face=Calibri>Use the <img src=backbutton.jpg> button to return and try

again.</font></h3><br><br>")

response.write("Fields marked with * cannot be left blank.")

response.write("<br><br>")

flag3 = True

End If

Next i

Here's something more complex for checking a valid time format, HH:MM. In VB it's passed in as type String, passed out as type smalldatetime to SQL:

' checks for valid time in HH:MM in 24-hr format

Dim hoursOKFlag As Boolean

Dim minsOKFlag As Boolean

Dim flag4 As Boolean

Dim allowableHours() As String =

{"00","01","02","03","04","05","06","07","08","09","10","11","12","13","14","15","16","17","18","19","20","21","22","23"}

Dim allowableMinutes() As String =

{"00","01","02","03","04","05","06","07","08","09","10","11","12","13","14","15","16","17","18","19","20","21","22","23","24","25

","26","27","28","29","30","31","32","33","34","35","36","37","38","39","40","41","42","43","44","45","46","47","48","49","50","5

1","52","53","54","55","56","57","58","59"}

flag4 = False

For Each k In timeCollection

hoursOKFlag = False

minsOKFlag = False

k = CStr(k)

If k <> Nothing Then

Dim splitTime As String() = k.Split(New [Char]() {":"})

For Each i In allowableHours

If splitTime(0) = i Then

hoursOKFlag = True

End If

Next i

For Each i In allowableMinutes

If splitTime(1) = i Then

minsOKFlag = True

End If

Next i

If (hoursOKFlag = False OR minsOKFlag = False) AND flag4 = False Then

response.write("<br><br>Time format in one or more fields is incorrect. Use HH:MM.")

flag4 = True

End If

End If

If k = Nothing AND flag4 = False Then

response.write("<br><br>Time format in one or more fields is incorrect. Use HH:MM.")

flag4 = True

End If

Next k

The Collection to preload here is timeCollection.

String lengths can be checked using If..Then and the Len() function. Or why not try client-side scripting? Functions in the HTML can check for validity, especially with HTML 5 - there's more information from Microsoft here -> http://msdn.microsoft.com/en-us/library/yb52a4x0.aspx. Do remember that database-side validation is fine - but put some try-catch exception handling in your application code first, otherwise the database errors will be fed in a raw format to the user!

And you could try using the DataReader object too, to load the data from the database. Or use SQL Server Reporting Services to generate some BI (business intelligence - a dichotomy, perhaps?) from the gathered data.

As you can see, linking HTML code to SQL can be as easy or complex. Hopefully the method I've outlined has given you the basic idea and you will be able to experiment with different implementation methods.

And once again - have a happy New Year.